Hallucinations

The system can generate details that sound plausible but are not true: invented facts, false citations, or inaccurate links between events and sources.

Practical response: Do not treat any answer as evidence unless the underlying source is visible and independently checkable.

False Confidence

A smooth, confident tone can make a weak answer feel stronger than it is.

Practical response: Judge the strength of the evidence, not the confidence of the wording.

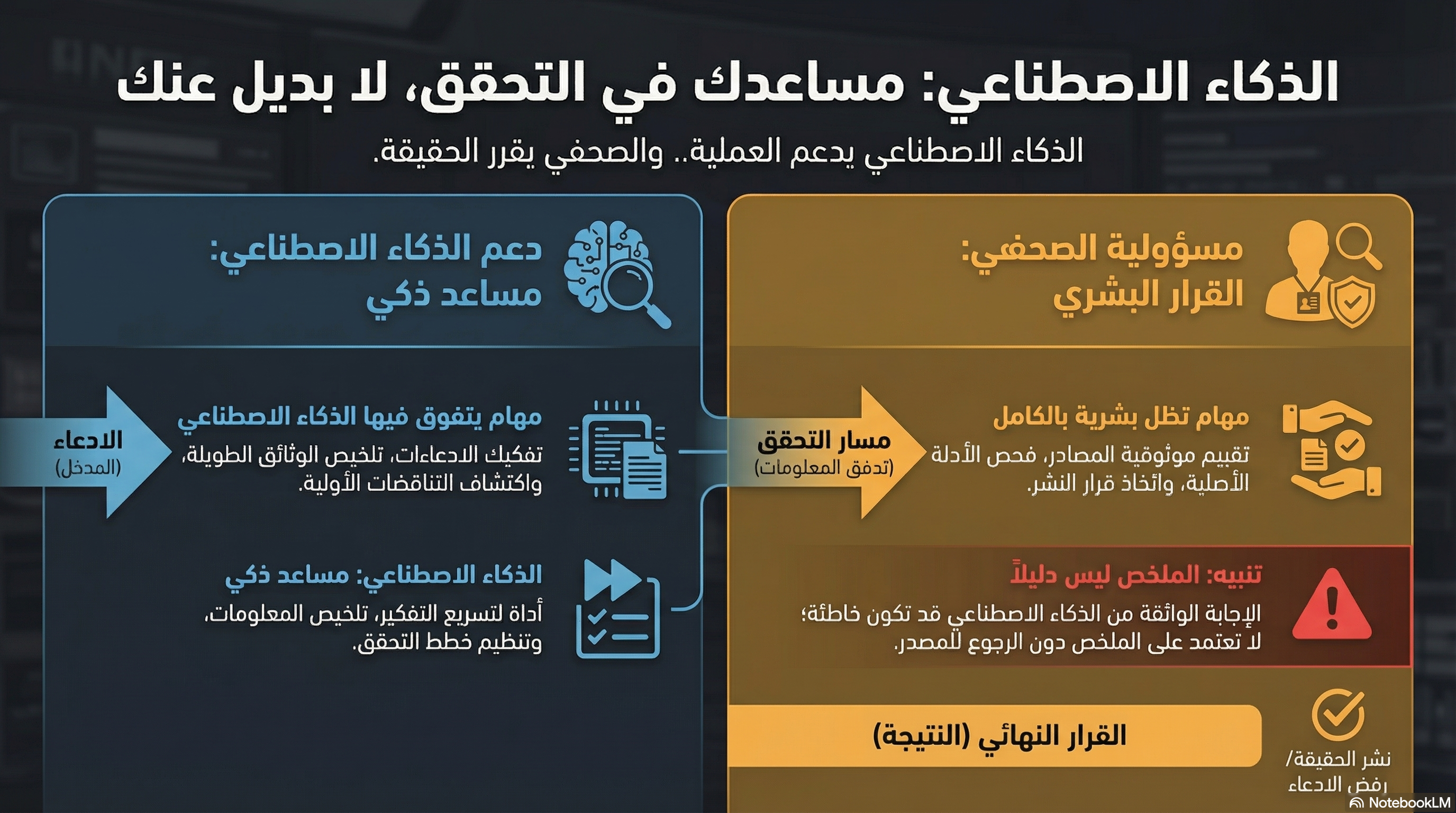

Summaries Are Not Evidence

A summary may speed up understanding, but it is not the original document, page, or recording.

Practical response: Keep the original source open, and when the source and summary diverge, trust the source.

Sensitive Data and Privacy Risks

Journalistic material may include private identities, unpublished records, or details that could cause harm if uploaded carelessly.

Practical response: Check newsroom policy before uploading files, and remove unnecessary identifiers whenever possible.

Source Confusion

Models can blend primary sources, secondary reporting, commentary, and rumor into a single answer that looks tidy while hiding how weakly each claim is traced to its origin.

Practical response: Always ask: what is the primary source, what do we directly see, and what is still only being claimed?

Over-Reliance on AI

Deadline pressure can push people toward using the model as a substitute for step-by-step verification.

Practical response: Use AI early to structure the task, then return to human verification before any final judgment.

Human Judgment Still Matters

Even the best tools cannot, on their own, judge editorial fairness, publication sensitivity, or the full meaning of context.

Practical response: Leave publication decisions and final framing to the journalist who reviewed the evidence and context.